Supervised Learning Workflow and Algorithms - MATLAB & Simulink

Depending on the task to be performed (e.g., detection, segmentation, classification, monitoring, prediction, or prognosis), the preferred strategy for cohorting may differ. For example, if the aim of a study is to predict the tumor stage, cohorting may require tissue specimens with appropriate treatment response grade scores. The imaging examinations that serve as the index test are then retrieved retrospectively. Multi-disciplinary team building refers to a process where people from different fields and levels of expertise are gathered to share their knowledge and collaborate on a joint project.

You can also automate transfer learning, network architecture search, data pre-processing, and advanced pre-processing involving data encoding, cleaning, and verification. The choice of the right candidate ML model depends on model complexity, performance, available resources, and maintainability.

Types of learning

Our goal is to provide a sense of the overarching flow of a deep learning project, from data acquisition and preprocessing to evaluation and hyperparameter tuning.

In classification, the goal is to assign a class from a finite set of classes to an observation. Applications include spam filters, advertisement recommendation systems, and image and speech recognition. Predicting whether a patient will have a heart attack within a year is a classification problem, and the possible classes are true and false. Classification algorithms usually apply to nominal response values.

To perform a cost-sensitive test, use the compareHoldout or testcholdout function. Both functions statistically compare the predictive performance of two classification models by including a cost matrix in the analysis.
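To make the role of a cost matrix concrete, here is a minimal Python sketch. It is not the statistical test that compareHoldout or testcholdout performs; it only computes the average misclassification cost of two sets of predictions. The function name `expected_cost`, the labels, and the cost values are hypothetical, and the sketch assumes the convention that `cost_matrix[i, j]` is the cost of predicting class `j` when the true class is `i`.

```python
import numpy as np

def expected_cost(y_true, y_pred, cost_matrix):
    """Average misclassification cost over all observations.

    cost_matrix[i, j] is the (assumed) cost of predicting class j
    when the true class is i.
    """
    y_true = np.asarray(y_true)
    y_pred = np.asarray(y_pred)
    cost_matrix = np.asarray(cost_matrix, dtype=float)
    # Look up the cost of each (true, predicted) pair, then average.
    return cost_matrix[y_true, y_pred].mean()

# Hypothetical labels: 1 = heart attack within a year, 0 = no heart attack.
y_true = np.array([0, 0, 1, 1, 1, 0])
pred_a = np.array([0, 0, 1, 0, 0, 0])  # model A: two false negatives
pred_b = np.array([1, 1, 1, 1, 1, 0])  # model B: two false positives

# Hypothetical costs: missing a heart attack is 5x worse than a false alarm.
cost = np.array([[0, 1],
                 [5, 0]])

cost_a = expected_cost(y_true, pred_a, cost)
cost_b = expected_cost(y_true, pred_b, cost)
print(f"model A: {cost_a:.3f}, model B: {cost_b:.3f}")
```

Both hypothetical models make two errors out of six, so their accuracy is identical, yet their costs differ because the errors are of different kinds.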

It is good practice to test for and remove outliers, remove unnecessary features, fill in missing data, and filter out noisy examples. A machine learning pipeline is an automated way to execute the machine learning workflow. If the model does not perform up to our expectations, we can rebuild it after tuning its hyperparameters, the settings that control how the model is trained. Depending on the type of algorithm used, there can be many hyperparameters.
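As an illustration of the pipeline and hyperparameter ideas above, the following sketch chains missing-value imputation, scaling, and a classifier with scikit-learn, then searches over one hyperparameter. The synthetic data, the choice of logistic regression, and the candidate values for `C` are assumptions made for the example, not recommendations from the text.

```python
import numpy as np
from sklearn.impute import SimpleImputer
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import GridSearchCV
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler

# Synthetic data (assumption): 120 observations, 4 features, binary label.
rng = np.random.default_rng(0)
X = rng.normal(size=(120, 4))
y = (X[:, 0] + 0.5 * X[:, 1] > 0).astype(int)

# Knock out about 5% of the values to simulate missing data.
X[rng.random(X.shape) < 0.05] = np.nan

# The pipeline runs the preprocessing and the model as one unit.
pipeline = Pipeline([
    ("impute", SimpleImputer(strategy="median")),  # fill in missing data
    ("scale", StandardScaler()),                   # standardize features
    ("clf", LogisticRegression(max_iter=1000)),
])

# Try several values of the regularization hyperparameter C
# using 5-fold cross-validation.
search = GridSearchCV(pipeline, {"clf__C": [0.01, 0.1, 1.0, 10.0]}, cv=5)
search.fit(X, y)

best_C = search.best_params_["clf__C"]
print("best C:", best_C, "cross-validated accuracy:", search.best_score_)
```

Because the imputer and scaler sit inside the pipeline, they are refit on each training fold, which avoids leaking information from the held-out fold into preprocessing.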

Data Visualization and Exploratory Data Analysis

This step involves applying and migrating the model to business operations for their use. In the case of an AutoML pipeline, the ML model can be evaluated with the help of various statistical methods and business rules. However, the results obtained may contribute to the development of commercial products. Patients can withdraw their consent at any time, in which case all their personal data in the biobank is destroyed. A multi-disciplinary team with clinical, imaging, and technical expertise is recommended.

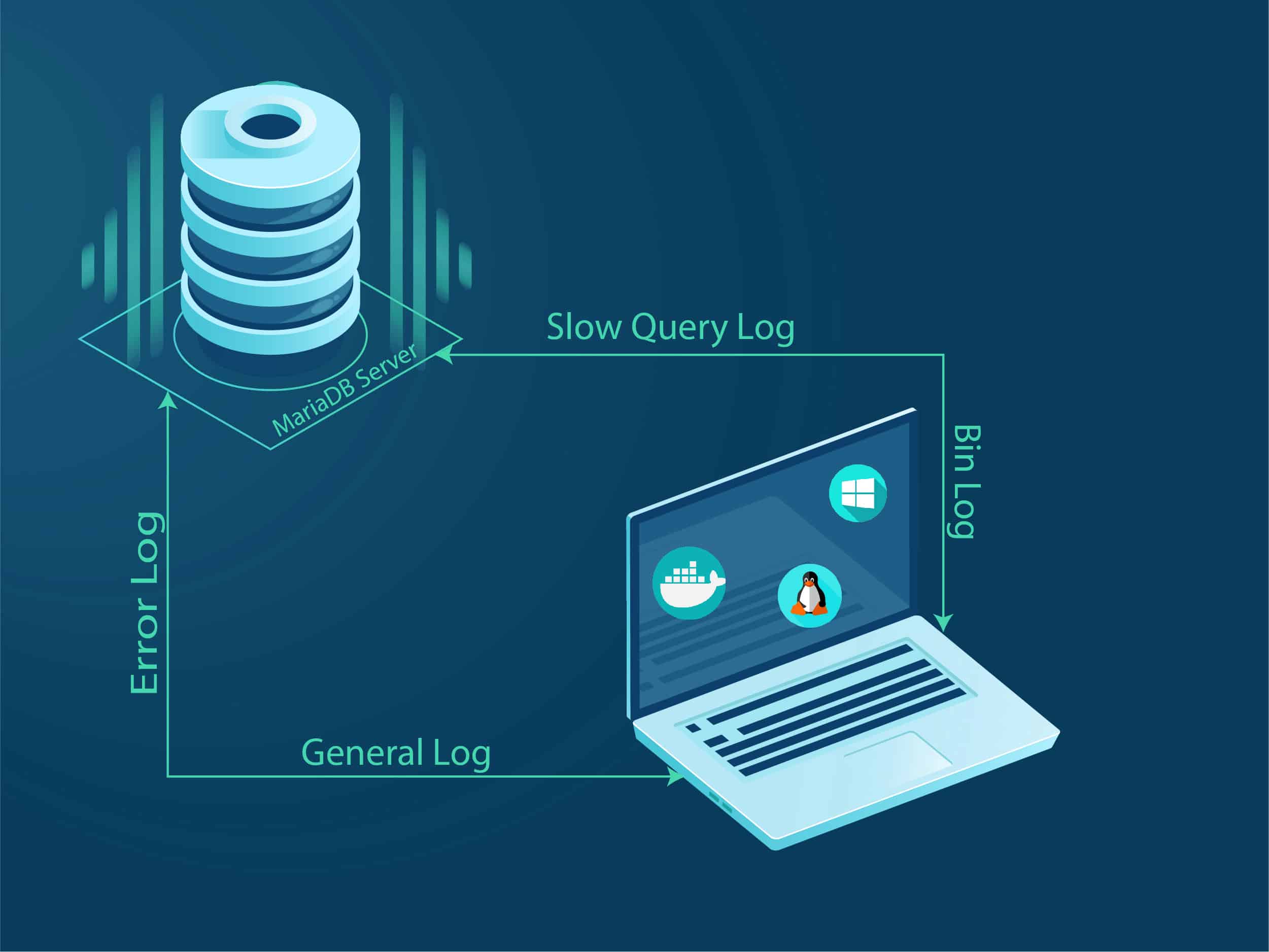

It aids in eradicating any obstacles or friction between IT, data science teams, and DevOps. It takes a significant amount of computation to evaluate a single input using a neural network, let alone manage traffic from many different users. As a result, when deploying a neural network model in a Docker container, it’s important to host the container where it can access powerful computing resources. Cloud platforms like AWS, GCP and Azure are great places to start. These platforms provide flexible hosting services for applications that can scale up to meet changing demand.

What Is Supervised Learning?

The receiver operating characteristic (ROC) curve illustrates the diagnostic performance at various classification thresholds. The area under the ROC curve (AUC) is frequently used to compare different algorithms on the same task. To restrict the comparison to a clinically useful range of operation, the partial AUC can also be used. Several open-source libraries are available under a variety of permissive licenses.
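A short sketch of these metrics using scikit-learn is given below. The labels and scores are made up for illustration. The `max_fpr` argument of `roc_auc_score` returns a standardized partial AUC over the false positive rate range `[0, max_fpr]`; the cutoff of 0.2 is an arbitrary assumption, not a clinically derived value.

```python
import numpy as np
from sklearn.metrics import roc_auc_score, roc_curve

# Hypothetical ground truth and classifier scores.
y_true = np.array([0, 0, 0, 0, 1, 1, 1, 1])
y_score = np.array([0.1, 0.3, 0.35, 0.8, 0.4, 0.6, 0.7, 0.9])

# Points of the ROC curve: one (FPR, TPR) pair per threshold.
fpr, tpr, thresholds = roc_curve(y_true, y_score)

# Area under the full ROC curve.
auc = roc_auc_score(y_true, y_score)

# Standardized partial AUC restricted to FPR <= 0.2.
partial_auc = roc_auc_score(y_true, y_score, max_fpr=0.2)

print(f"AUC: {auc:.4f}, partial AUC (FPR <= 0.2): {partial_auc:.4f}")
```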

- Data Splitting – Splitting the data into training, validation, and test datasets to be used during the core machine learning stages to produce the ML model.

- If we set the train_test_split function’s stratify parameter to our array of labels, the function will compute the proportion of each class, and ensure that this ratio is the same in our training and validation data.

- This allows easier updates and versioning, as long as network architecture remains identical.

- Data Labeling – The operation of the Data Engineering pipeline, where each data point is assigned to a specific category.

Rather, in a successful deep learning project, we often pivot back and forth between different steps, continuously tweaking and debugging our scripts, data, and architectures in our quest for the best-performing model. Scikit-learn provides the train_test_split function, which splits our data into training and validation datasets and lets us specify the size of the validation data.
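The stratified split described above can be sketched as follows. The toy dataset, the 80/20 class imbalance, and the 20% validation size are assumptions chosen for illustration.

```python
import numpy as np
from sklearn.model_selection import train_test_split

# Toy dataset (assumption): 100 examples, 80 of class 0 and 20 of class 1.
X = np.arange(200).reshape(100, 2)
y = np.array([0] * 80 + [1] * 20)

# test_size sets the fraction held out for validation;
# stratify=y keeps the class ratio the same in both splits.
X_train, X_val, y_train, y_val = train_test_split(
    X, y, test_size=0.2, stratify=y, random_state=0
)

print("train class-1 fraction:", y_train.mean())
print("val class-1 fraction:  ", y_val.mean())
```

Without `stratify`, a small validation set drawn from imbalanced data can end up with very few minority-class examples, which makes validation metrics unreliable.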

A Machine Learning Pipeline

It is thus necessary to define whether the developed solution is intended to be integrated into an existing infrastructure or used as a standalone application. During the first phase of deployment, a containerized approach such as that offered by Docker or Kubernetes may be adopted, with web-based applications (REST API) used for subsequent deployment. Deployment refers to the implementation of a locally developed solution at a larger scale, such as at the institution level or within a healthcare network.

When training an algorithm in a research project intended for clinical practice, it is critical to clearly understand the metrics used to evaluate task performance. Specific metrics are defined for each computer vision task, and they may differ from the clinical objective. In this deep learning project, you will find similar images using deep learning and locality sensitive hashing to find customers who are most likely to click on an ad. To guarantee optimal performance and governance in connection with business decisions or their effects, it is vital to monitor the performance of ML models deployed in production systems.

What are the key steps in a machine learning project?

- Data preparation. Exploratory data analysis (EDA): learning about the data you're working with.

- Train the model on data (3 steps: choose an algorithm, overfit the model, reduce overfitting with regularization).

- Analysis/Evaluation.

- Serve model (deploying a model)

- Retrain model.

- Machine Learning Tools.

Depending on the size of the dataset, we may be able to write our scraped data directly to raw data files (e.g., .txt or .csv). However, for larger datasets, we sometimes need to store the resulting data in our own databases.

For example, suppose you want to predict whether someone will have a heart attack within a year. You have a set of data on previous patients, including age, weight, height, blood pressure, etc. You know whether the previous patients had heart attacks within a year of their measurements. So, the problem is combining all the existing data into a model that can predict whether a new person will have a heart attack within a year.

When the performance of the model on the validation data starts to degrade while training continues, the model has begun to overfit.
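One common way to act on this signal is early stopping: keep the model from the epoch with the best validation loss and stop once the loss has failed to improve for a few epochs. The sketch below is a minimal, framework-free illustration; the function name `early_stopping_epoch`, the `patience` value, and the loss values are hypothetical.

```python
def early_stopping_epoch(val_losses, patience=2):
    """Return the index of the epoch with the best validation loss,
    scanning until the loss has not improved for `patience` epochs."""
    best_loss = float("inf")
    best_epoch = 0
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss:
            # Validation loss improved: remember this epoch.
            best_loss = loss
            best_epoch = epoch
        elif epoch - best_epoch >= patience:
            # No improvement for `patience` epochs: stop scanning.
            break
    return best_epoch

# Hypothetical validation losses, one per training epoch.
val_losses = [0.90, 0.70, 0.55, 0.50, 0.52, 0.58, 0.66]
best = early_stopping_epoch(val_losses)
print("best epoch:", best)  # prints "best epoch: 3"
```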

In undersampling, we balance our data by throwing out examples from our majority class. Model Serving – The process of addressing the ML model artifact in a production environment. The figure below shows the core steps involved in a typical ML workflow. For an example of cost-sensitive evaluation, see Conduct Cost-Sensitive Comparison of Two Classification Models.
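The undersampling step mentioned above can be sketched with NumPy as follows. This is one simple variant (random undersampling down to the size of the smallest class); the function name `undersample` and the toy data are assumptions made for the example.

```python
import numpy as np

def undersample(X, y, seed=0):
    """Randomly drop examples so every class has as many examples
    as the smallest class."""
    rng = np.random.default_rng(seed)
    classes, counts = np.unique(y, return_counts=True)
    n_min = counts.min()
    # For each class, keep n_min randomly chosen row indices.
    keep = np.concatenate([
        rng.choice(np.flatnonzero(y == c), size=n_min, replace=False)
        for c in classes
    ])
    rng.shuffle(keep)  # avoid returning the classes in blocks
    return X[keep], y[keep]

# Toy imbalanced dataset (assumption): 90 majority, 10 minority examples.
X = np.arange(200).reshape(100, 2)
y = np.array([0] * 90 + [1] * 10)

X_bal, y_bal = undersample(X, y)
print("class counts after undersampling:", np.bincount(y_bal))
```

The trade-off is that discarded majority-class examples are lost to training, so this approach fits best when the majority class is large enough to spare them.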

Who is the father of AI?

Where did the term “Artificial Intelligence” come from? One of the greatest innovators in the field was John McCarthy, widely recognized as the father of Artificial Intelligence for his contributions to Computer Science and AI.

Regression project to implement logistic regression in Python from scratch on streaming app data. Compliance with local and international laws and standards is a vital part of governance. Model auditing and reporting are used to give production models end-to-end traceability and explainability. The monitor phase monitors and analyzes the ML application that has been deployed. Think of it as comparing the results predicted by the model with the actual vehicle. The deploy module operationalizes the ML models created within the build module. Here, the model's execution and behavior are tested in a production or production-like environment to confirm that the model is robust and adaptable enough for production use.