Batch Test Your Natural Language Understanding Model

If there are individual utterances that you know ahead of time must get a particular result, add them to the training data. They can also be added to a regression test set to confirm that they are getting the right interpretation.

According to Zendesk, tech companies receive more than 2,600 customer support inquiries per month. Using NLU technology, you can sort unstructured data (email, social media, live chat, etc.) by topic, sentiment, and urgency, among other attributes. These tickets can then be prioritized and routed directly to the relevant agent. With text analysis solutions like MonkeyLearn, machines can understand the content of customer support tickets and route them to the correct departments without employees having to open every single ticket.
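Returning to the regression test set described at the start of this section, the idea can be sketched in a few lines of Python. This is a minimal illustration, not any vendor's tooling: `toy_classifier` is a hypothetical stand-in for a call to your deployed NLU model, and the intent names are invented for the example.

```python
from typing import Callable, Dict, List, Tuple

# Hypothetical regression set: (utterance, expected intent) pairs.
REGRESSION_SET: List[Tuple[str, str]] = [
    ("I want to order a large pizza", "ORDER"),
    ("cancel my order", "CANCEL_ORDER"),
    ("where is my delivery", "ORDER_STATUS"),
]


def toy_classifier(utterance: str) -> str:
    """Stand-in for a real NLU call; simple keyword rules for illustration."""
    text = utterance.lower()
    if "cancel" in text:
        return "CANCEL_ORDER"
    if "where" in text:
        return "ORDER_STATUS"
    return "ORDER"


def run_regression(classify: Callable[[str], str],
                   cases: List[Tuple[str, str]]) -> Dict[str, object]:
    """Run every case and report accuracy plus the failing utterances."""
    failures = []
    for utterance, expected in cases:
        predicted = classify(utterance)
        if predicted != expected:
            failures.append((utterance, expected, predicted))
    accuracy = (len(cases) - len(failures)) / len(cases) if cases else 0.0
    return {"accuracy": accuracy, "failures": failures}


report = run_regression(toy_classifier, REGRESSION_SET)
print(report)
```

Rerunning the same set after every retraining makes it easy to spot an utterance whose interpretation has silently changed.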

All of this information forms a training dataset, which you would use to fine-tune your model. Each NLU that follows the intent-utterance model uses slightly different terminology and a slightly different format for this dataset, but they all follow the same principles. Early natural language generation (NLG) systems worked from templates: based on some data or query, the system would fill in the blank, like a game of Mad Libs. Over time, natural language generation systems have evolved with the application of hidden Markov chains, recurrent neural networks, and transformers, enabling more dynamic text generation in real time.
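To make the intent-utterance training dataset mentioned above more concrete, here is a minimal sketch in Python. The layout and the intent names (`check_balance`, `transfer_money`) are assumptions chosen for illustration; each NLU platform defines its own file format.

```python
from collections import Counter
from typing import Dict, List

# Hypothetical intent -> example utterances mapping.
TRAINING_DATA: Dict[str, List[str]] = {
    "check_balance": [
        "what is my account balance",
        "how much money do I have",
    ],
    "transfer_money": [
        "send 50 dollars to Alex",
        "transfer money to my savings account",
    ],
}


def to_examples(data: Dict[str, List[str]]) -> List[Dict[str, str]]:
    """Flatten the mapping into one labeled row per utterance."""
    return [
        {"text": utterance, "intent": intent}
        for intent, utterances in data.items()
        for utterance in utterances
    ]


examples = to_examples(TRAINING_DATA)

# Counting examples per intent is a quick check for class imbalance.
counts = Counter(row["intent"] for row in examples)
print(counts)
```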

Create annotation sets manually

For example, an NLU might be trained on billions of English phrases ranging from the weather to cooking recipes and everything in between. If you’re building a bank app, distinguishing between credit cards and debit cards may be more important than distinguishing between types of pies. To help the NLU model better process finance-related tasks, you would send it examples of the phrases and tasks you want it to get better at, fine-tuning its performance in those areas.

Surface real-time actionable insights to provide your employees with the tools they need to pull metadata and patterns from massive troves of data. Natural Language Understanding is a best-of-breed text analytics service that can be integrated into an existing data pipeline and that supports 13 languages, depending on the feature.

Often, annotation inconsistencies will occur not just between two utterances, but between two sets of utterances. In this case, it may be possible (and indeed preferable) to apply a regular expression. Nuance provides a tool called the Mix Testing Tool (MTT) for running a test set against a deployed NLU model and measuring the accuracy of the set on different metrics.
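As an illustration of fixing annotation inconsistencies with a regular expression, the sketch below tags every unannotated time expression so that a whole set of utterances ends up annotated the same way. The inline `[literal](ENTITY)` notation and the `TIME` entity are assumptions made for this example; adapt the pattern to whatever annotation format your tool exports.

```python
import re

# Match times such as "7 pm" or "10am" that are not already inside
# an annotation of the assumed form [literal](ENTITY).
TIME_PATTERN = re.compile(
    r"(?<!\[)\b(\d{1,2}\s?(?:am|pm))\b(?!\]\()",
    re.IGNORECASE,
)


def annotate_times(utterance: str) -> str:
    """Wrap each unannotated time expression as [time](TIME)."""
    return TIME_PATTERN.sub(r"[\1](TIME)", utterance)


utterances = [
    "book a table at 7 pm",
    "book a table at [7 pm](TIME)",  # already annotated, left unchanged
    "wake me at 10am tomorrow",
]
for utterance in utterances:
    print(annotate_times(utterance))
```

Running the substitution over the full annotation set is idempotent, so it can be applied repeatedly without double-wrapping literals.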

Natural language understanding applications

This enables text analysis and allows machines to respond to human queries. NLU helps computers understand human language by analyzing and interpreting the basic parts of speech separately. It enables conversational AI solutions to accurately identify the intent of the user and respond to it. When it comes to conversational AI, the critical point is to understand what the user says or wants to say, in both spoken and written language. IBM Watson NLP Library for Embed, powered by Intel processors and optimized with Intel software tools, uses deep learning techniques to extract meaning and metadata from unstructured data. IBM Watson® Natural Language Understanding likewise uses deep learning to extract meaning and metadata from unstructured text data.

Businesses use Autopilot to build conversational applications such as messaging bots, interactive voice response (phone IVRs), and voice assistants. Developers only need to design, train, and build a natural language application once to have it work with all existing (and future) channels such as voice, SMS, chat, Messenger, Twitter, WeChat, and Slack. Business applications often rely on NLU to understand what people are saying in both spoken and written language. This data helps virtual assistants and other applications determine a user’s intent and route them to the right task.

LLMs won’t replace NLUs. Here’s why

Because fragments are so popular, Mix has a predefined intent called NO_INTENT that is designed to capture them. NO_INTENT automatically includes all of the entities that have been defined in the model, so that any entity or sequence of entities spoken on their own doesn’t require its own training data. By using a general intent and defining the entities SIZE and MENU_ITEM, the model can learn about these entities across intents, and you don’t need examples containing each entity literal for each relevant intent. By contrast, if the size and menu item are part of the intent, then training examples containing each entity literal will need to exist for each intent.
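The trade-off between a general intent with entities and intents that encode the entity values can be sketched as follows. The data layout, the `ORDER` intent, and the literal lists are hypothetical and only illustrate the idea; this is not the Mix.nlu file format.

```python
from itertools import product

SIZES = ["small", "medium", "large"]
MENU_ITEMS = ["coffee", "tea", "latte"]

# Option A: one general intent; SIZE and MENU_ITEM are annotated entities,
# so a handful of samples can cover all literals.
general_intent_samples = [
    {"intent": "ORDER", "text": "I'd like a large coffee",
     "entities": {"SIZE": "large", "MENU_ITEM": "coffee"}},
    {"intent": "ORDER", "text": "a small tea please",
     "entities": {"SIZE": "small", "MENU_ITEM": "tea"}},
]

# Option B: size and item are part of the intent name, so every
# combination becomes its own intent that needs its own examples.
per_combination_intents = [
    f"ORDER_{size.upper()}_{item.upper()}"
    for size, item in product(SIZES, MENU_ITEMS)
]

print(len({s["intent"] for s in general_intent_samples}))  # intents in option A
print(len(per_combination_intents))                        # intents in option B
```

With three sizes and three menu items, option B already needs nine intents, and the count grows multiplicatively with every new literal.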

NLU is a branch of natural language processing (NLP), which helps computers understand and interpret human language by breaking down the elemental pieces of speech. While speech recognition captures spoken language in real time, transcribes it, and returns text, NLU goes beyond recognition to determine a user’s intent. Speech recognition is powered by statistical machine learning methods which add numeric structure to large datasets. In NLU, machine learning models improve over time as they learn to recognize syntax, context, language patterns, unique definitions, sentiment, and intent. Natural language processing works by taking unstructured data and converting it into a structured data format.

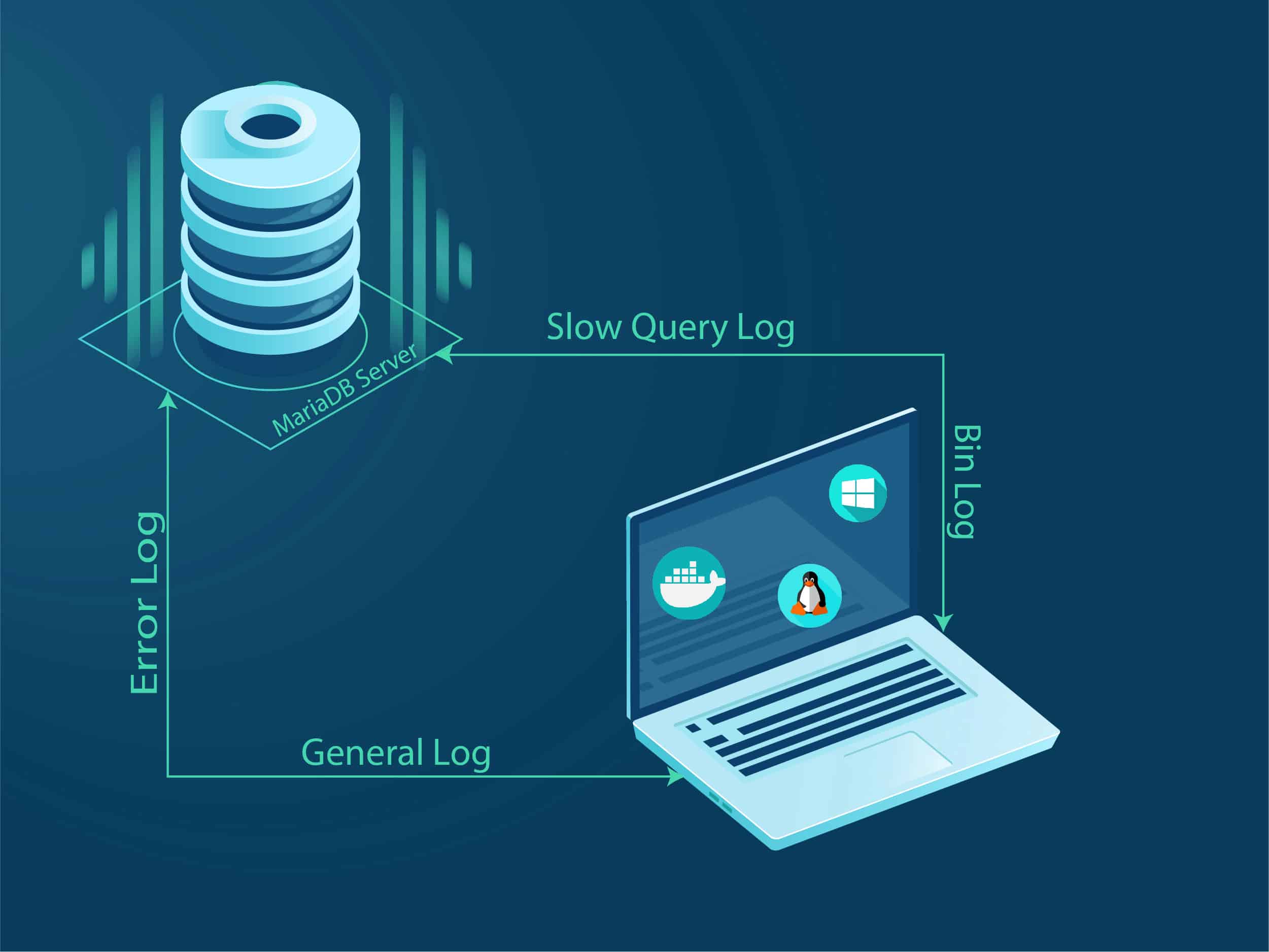

Accessing Diagnostic Data

NLU helps to improve the quality of clinical care by improving decision support systems and the measurement of patient outcomes. A quick overview of the integration of IBM Watson NLU and accelerators on Intel Xeon-based infrastructure, with links to various resources, is available. Accelerate your business growth as an Independent Software Vendor (ISV) by innovating with IBM. Partner with us to deliver enhanced commercial solutions embedded with AI to better address clients’ needs. The Lite plan is perpetual for 30,000 NLU items and one custom model per calendar month. Once you reach the 30,000 NLU items limit in a calendar month, your NLU instance will be suspended and then reactivated on the first day of the next calendar month.

- Its text analytics service offers insight into categories, concepts, entities, keywords, relationships, sentiment, and syntax from your textual data to help you respond to user needs quickly and efficiently.

- We support a number of different tokenizers, or you can create your own custom tokenizer.

- Train Watson to understand the language of your business and extract customized insights with Watson Knowledge Studio.

- Machine learning policies (like TEDPolicy) can then make a prediction based on the multi-intent even if it does not explicitly appear in any stories.

- Recommendations on Spotify or Netflix, auto-correct and auto-reply, virtual assistants, and automatic email categorization, to name just a few.

- This very rough initial model can serve as a starting base that you can build on for further artificial data generation internally and for external trials.

The greater the capability of NLU models, the better they are at predicting speech context. In fact, one of the factors driving the development of AI chips that support larger model training sizes is the relationship between an NLU model’s increased computational capacity and its effectiveness (e.g., GPT-3). Apply natural language processing to discover insights and answers more quickly, improving operational workflows.

NLU Visualized

Build fully-integrated bots, trained within the context of your business, with the intelligence to understand human language and help customers without human oversight. For example, allow customers to dial into a knowledge base and get the answers they need.

We recommend that you configure these options only if you are an advanced TensorFlow user and understand the implementation of the machine learning components in your pipeline. These options affect how operations are carried out under the hood in TensorFlow. SpacyNLP also provides word embeddings in many different languages, so you can use this as another alternative, depending on the language of your training data.

Depending on your business, you may need to process data in a number of languages. Having support for many languages other than English will help you be more effective at meeting customer expectations. Using our example, an unsophisticated software tool could respond by showing data for all types of transport, and display timetable information rather than links for purchasing tickets. Without being able to infer intent accurately, the user won’t get the response they’re looking for. Rather than relying on computer language syntax, Natural Language Understanding enables computers to comprehend and respond accurately to the sentiments expressed in natural language text. According to the traditional system there are three steps in natural language understanding.

Get Started with Natural Language Understanding in AI

This document is not meant to provide details about how to create an NLU model using Mix.nlu, since this process is already documented. The idea here is to give a set of best practices for developing more accurate NLU models more quickly. This document is aimed at developers who already have at least a basic familiarity with the Mix.nlu model development process.